4 main types of artificial intelligence: Explained

How close are we to creating an artificial superintelligence that surpasses the human mind? Though we aren't close, the pace is quickening as we develop more advanced types of AI.

Superintelligent AI could be humanity's last invention. An AI sophisticated enough to create AI entities with even greater intelligence could change human invention forever. Such entities would surpass human intelligence and achieve superhuman feats.

How close are we to creating AI that surpasses the human mind? The short answer is not very close, but progress has accelerated since the modern field of AI began in the 1950s.

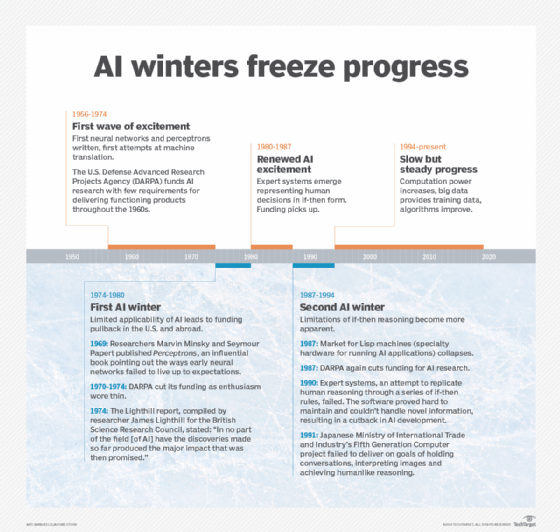

In the 1950s and 1960s, AI advanced dramatically as computer scientists, mathematicians and experts in other fields improved the algorithms and hardware. Despite assertions by AI's pioneers that a thinking machine comparable to the human brain was imminent, the goal proved elusive, and support for the field waned. AI research went through several ups and downs until it surged again around 2012, propelled by the deep learning revolution.

Today, interest in and applications of AI are at an all-time high, with breakthroughs arriving at a rapid pace. Generative AI programs, such as ChatGPT, have generated a lot of discussion in both the AI community and the general public. With the growing number of generative AI models, AI is now a usable tool for creating unique text, images and audio that are -- at least at first glance -- indistinguishable from human-made content. Although many people are excited by this prospect, generative AI has already come under fire for failing to credit its source data in the creation of art, and its commercial use has been cited as a contributing factor in both writer and actor strikes.

Even though generative AI has developed rapidly in the last few years, it's still a far cry from superintelligent AI. Generative AI can create text, images and audio at near-human quality only because it's trained on an immense amount of data. The AI program doesn't know whether the data it provides to a user is current, nor whether the advice it gives is accurate. In one recent case, ChatGPT created fictitious court cases that a lawyer unknowingly referenced in court.

But what determines the difference between our current generative AI models and superintelligent AI? AI can be categorized based on either capabilities or functionality.

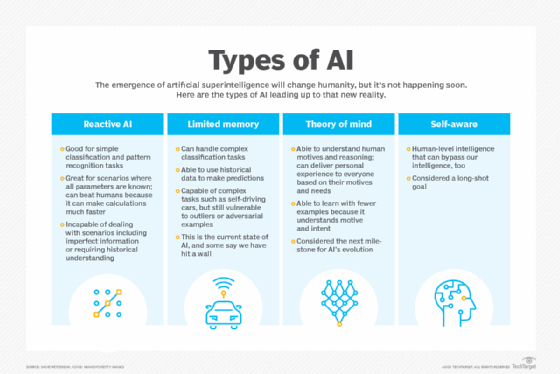

There are four main types of AI based on functionality. The first two types belong to a category known as narrow AI, or AI that's trained to perform a specific or limited range of tasks. The last two types have yet to be achieved and belong to a category sometimes called strong AI.

1. Reactive AI

Reactive AI algorithms operate only on present data and have limited capabilities. This type of AI doesn't have any specific functional memory, meaning it can't use previous experiences to inform its present and future actions.

That's the case with many AI and machine learning models. Stemming from statistical math, these models can consider huge chunks of data and produce a seemingly intelligent output.

This kind of AI is known as reactional or reactive AI, and it performs beyond human capacity in certain domains. Most notably, IBM's reactional AI Deep Blue defeated chess grandmaster Garry Kasparov in 1997. This type of AI is also useful for recommendation engines and spam filters.
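To make the "present data only" idea concrete, here is a minimal sketch of a reactive decision rule in the style of a spam filter. The keyword weights and threshold are hypothetical values chosen for illustration, not taken from any real filter; the point is only that the output depends on the current input and nothing else.

```python
# Hypothetical keyword weights; a real filter would learn these from data.
SPAM_WEIGHTS = {"free": 2.0, "winner": 3.0, "urgent": 1.5, "meeting": -2.0}
THRESHOLD = 3.0  # assumed cutoff for this toy example


def spam_score(message: str) -> float:
    """Sum the weights of known words in the current message."""
    words = message.lower().split()
    return sum(SPAM_WEIGHTS.get(word, 0.0) for word in words)


def is_spam(message: str) -> bool:
    """Reactive decision: uses only the message in front of it.

    Nothing is stored between calls, so the same message always
    produces the same answer, no matter what was seen before.
    """
    return spam_score(message) >= THRESHOLD
```

Because the function keeps no state, it cannot notice that a sender has written ten times in the last minute or adapt to what it saw earlier.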

However, reactive AI is extremely limited. In real life, many of our actions aren't reactive -- for one thing, we might not have all the information at hand to react to. Yet humans are masters of anticipation and can prepare for the unexpected, even based on imperfect information. Handling imperfect information has been one of the target milestones in the evolution of AI and is necessary for a range of use cases, from natural language understanding to self-driving cars.

For that reason, researchers worked to develop the next level of AI, which has the ability to remember and learn.

2. Limited memory machines

Limited memory-based AI can store data from past experiences temporarily.

As mentioned earlier, in 2012, we witnessed the deep learning revolution. Deep learning uses layered artificial neural networks, which are loosely modeled on the way neurons in the brain connect. One of the characteristics of deep learning is that its performance generally improves as it's trained on more data.

Deep learning dramatically improved AI's image recognition capabilities, and soon other kinds of AI algorithms were born, such as deep reinforcement learning. These AI models were much better at absorbing the characteristics of their training data, but more importantly, they were able to improve over time.

One notable example is DeepMind's AlphaStar project, which defeated top professional players at the real-time strategy game StarCraft II. The models were developed to work with imperfect information, and the AI repeatedly played against itself to learn new strategies and perfect its decisions. In StarCraft, a decision a player makes early in the game could have decisive effects later. As such, the AI had to be able to predict the outcome of its actions well in advance.

We witness the same concept in self-driving cars, where the AI must predict the trajectory of nearby cars to avoid collisions. In these systems, the AI is basing its actions on historical data. Needless to say, reactive machines were incapable of dealing with situations like these.
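The trajectory example can be sketched with a small buffer of recent observations. This is a simplified illustration, assuming constant velocity and a hypothetical `TrajectoryTracker` class; real driving systems use far richer models. It shows the defining trait of limited memory: a short window of past data is kept temporarily and older observations are discarded.

```python
from collections import deque


class TrajectoryTracker:
    """Toy limited-memory predictor for one nearby vehicle (hypothetical)."""

    def __init__(self, window: int = 3):
        # deque(maxlen=...) silently drops the oldest entry when full,
        # so only the most recent observations are remembered.
        self.history = deque(maxlen=window)

    def observe(self, t: float, x: float, y: float) -> None:
        """Record the vehicle's position (x, y) at time t."""
        self.history.append((t, x, y))

    def predict(self, t_future: float):
        """Extrapolate the position at t_future, assuming constant velocity."""
        if len(self.history) < 2:
            return None  # not enough past data to estimate motion
        t0, x0, y0 = self.history[0]
        t1, x1, y1 = self.history[-1]
        dt = t1 - t0
        if dt == 0:
            return None  # cannot estimate velocity from a single instant
        vx = (x1 - x0) / dt
        vy = (y1 - y0) / dt
        lead = t_future - t1
        return (x1 + vx * lead, y1 + vy * lead)
```

With a single observation the tracker has nothing to extrapolate from and returns `None`, which is the position a purely reactive system is always in.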

Limited memory AI is also commonly used in chatbots, virtual assistants and natural language processing.

Despite all these advancements, AI still lags behind human intelligence. Most notably, it requires huge amounts of data to learn simple tasks. While the models can be retrained to improve, changes to the environment the AI was trained on would force a full retraining from scratch. For instance, consider language learning: Once we learn a second language, learning a third and a fourth becomes progressively easier. For AI, it makes no difference.

That's the limitation of narrow AI -- it can become perfect at doing a specific task, but fails miserably with the slightest alterations.

3. Theory of mind

Theory of mind capability refers to the AI machine's ability to attribute mental states to other entities. The term is derived from psychology and requires the AI to infer the motives and intents of entities -- for example, their beliefs, emotions and goals. This type of AI has yet to be developed.

Emotion AI, currently under development, aims to recognize, simulate, monitor and respond appropriately to human emotion by analyzing voice, image and other kinds of data. But this capability, while potentially invaluable in healthcare, customer service, advertising and many other areas, is still far from being an AI possessing theory of mind. The latter isn't only capable of varying its treatment of human beings based on its ability to detect their emotional state -- it's also able to understand them.

Understanding, as it's generally defined, is one of AI's huge barriers. The type of AI that can generate a masterpiece portrait still has no clue what it has painted. It can generate long essays without understanding a word of what it has said. An AI that has reached the theory of mind state would have overcome this limitation.

4. Self-aware AI

The types of AI discussed above are precursors to self-aware or conscious machines -- systems that are aware of their own internal state as well as that of others. This essentially means an AI that's on par with human intelligence and can mimic the same emotions, desires or needs.

This is a very long-shot goal for which we possess neither the algorithms nor the hardware.

Whether artificial general intelligence (AGI) and self-aware AI go hand in hand remains to be seen. We still know too little about the human brain to build an artificial one that's nearly as intelligent.

Additional types of AI: Narrow, general and super AI

The fast-evolving nature of AI has resulted in numerous terms for the types of AI that humans have developed and continue to strive to invent. In addition, not everyone agrees on what these terms refer to, contributing to the difficulty of understanding what AI can and can't do.

The following commonly used terms are often associated with the four AI types described above:

- Narrow AI or weak AI. This is the most common type of AI that exists today. It's called narrow AI because it's trained to perform a single or narrow task, often far faster and better than humans can. Weak refers to the fact that the AI doesn't possess human-level general intelligence. Examples of narrow AI include chatbots, autonomous vehicles, Siri and Alexa, as well as recommendation engines.

- Artificial general intelligence. Sometimes referred to as strong AI, AGI is an as yet unrealized type of multifaceted machine intelligence that can learn and understand as well as a human can. Ideally, this AI could perform tasks as efficiently as a human and function similarly to one.

- Artificial superintelligence. This refers to AI that's self-aware, with cognitive abilities that surpass those of humans. Superintelligent AI would be able to think, reason, learn and make judgments. Artificial superintelligence would be better at everything humans do by a wide margin, as it would have access to vast amounts of memory, data processing and analysis.
