
Training data in facial recognition use cases reveals bias

Applications of facial recognition technology show incredible promise in law enforcement and personal privacy, but the risks are holding enterprises back from adoption.

For many industries, facial recognition technology is still an area of AI clouded by mystery and mistrust. However, the technology is beginning to appear in everyday settings, such as unlocking smartphones, controlling biometric access to buildings and helping passengers board planes faster at airports.

The initial facial recognition use cases are bringing up questions on appropriate use and potential safety risks. In an age where technology improves daily life, the fact remains that not every use case is friendly. In practice, facial recognition technology harbors a potential for abuse that might prove to be disastrous for willing and unwilling consumers. Identifying the positive, neutral and negative applications of facial recognition technology will aid in both research and development of the technology.

Facial recognition technology to enhance convenience

Using facial recognition in law enforcement can be a significant aid to public safety. Facial recognition software is making it easier to track, profile and identify individuals in public spaces without having a physical police presence in all locations. Giving law enforcement the ability to track and monitor specific individuals is a significant improvement over current processes that rely on a combination of human interaction and technology. Facial recognition technology allows law enforcement to keep a watchful eye on suspects and to search for missing persons, so police can identify and locate individuals with greater ease.

Similar facial recognition use cases are allowing customers to walk into stores, grab things off shelves, and walk out of the store without ever waiting in a checkout line. Stores like Amazon Go are using recognition technology to track customer movements throughout the store while identifying items picked up and placed in a shopping bag. Currently, customers need to scan their phone to gain access to the store, but facial recognition technology is developing to identify customers as they enter and exit the store.

Additionally, facial recognition technology is being tested at airports in Atlanta, Detroit, Minneapolis and Salt Lake City. For certain flights, facial recognition technology is being deployed to help check in passengers. Customers using this technology don't have to carry passports or boarding passes, as their face is all they'll need to get checked in, through security and boarded. This technology, in conjunction with police and airport security, can also help identify people on "no-fly" lists, or those who need accommodations.

Applications with inherent risk

Despite the positive applications, there are potential risks that facial recognition technology can bring when it falls into questionable hands. Being able to identify someone by their face is a big risk, particularly if someone is looking for your face for a nefarious purpose.

For example, a hacker could recognize your face through a computer system and use that moment to remotely wipe your computer. This is a point of significant concern for celebrities, politicians, and other highly visible individuals. Further, there are concerns that hackers might use a political candidate's face to stop you from accessing anything pertaining to that candidate.

Law enforcement agencies are seeing positive facial recognition use cases, but the field is also laden with the risk of bias and false identification. Joy Buolamwini, a researcher at the MIT Media Lab, published a study showing that nearly 35% of black women in the study were misidentified by prominent facial recognition systems. If police, authorities or companies relied on this kind of technology, they could incorrectly identify certain groups of individuals roughly one third of the time. This could result in arresting the wrong person, or wrongly denying access to systems, buildings or locations.

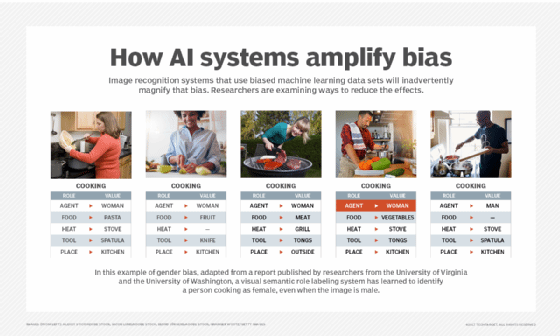

The study found that these systems were decidedly less accurate with people of color than they were with lighter-skinned faces. It traced the problem to a disparity in the datasets used to train these algorithms. However, what happens when the bias introduced in the training dataset is harder to detect? Until the technology can be perfected, certain cities, towns and enterprises are beginning to regulate appropriate use and data collection.
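A disparity of this kind can be surfaced with a simple per-group audit of a system's results. The following is a minimal sketch, not the method used in the study: the record format, the group names and all of the numbers are hypothetical and chosen only to illustrate how error rates are compared across groups.

```python
from collections import defaultdict

def error_rates_by_group(records):
    """Return {group: share of records where the prediction was wrong}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

# Hypothetical audit data: (group, predicted_label, actual_label).
records = (
    [("group_a", "match", "match")] * 99
    + [("group_a", "no_match", "match")] * 1
    + [("group_b", "match", "match")] * 65
    + [("group_b", "no_match", "match")] * 35
)

rates = error_rates_by_group(records)
# The gap between the best- and worst-served group is the figure to watch.
gap = max(rates.values()) - min(rates.values())
print(rates, round(gap, 2))
```

An overall accuracy figure would hide this gap, which is why the audit reports each group separately.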

The key to better systems: more diverse training data

AI systems are probabilistic, not deterministic, which means they will never deliver 100% accuracy in their results. Bias in training data adds further challenges: systems may fail to properly identify individuals of certain ethnicities, genders or ages, or struggle with particular lighting conditions or locations, because they have not seen enough relevant training data. Facial recognition technology is also not fully tested and is largely unregulated, leaving large loopholes in how companies go about collecting, storing and using your face as a data asset.
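To make the "probabilistic" point concrete, here is a minimal sketch of how a match decision is often framed: two faces are reduced to numeric vectors, a similarity score is computed and a threshold decides whether they count as the same person. This is an illustration under assumptions, not any vendor's implementation. The three-number vectors, the function names and the threshold values are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Similarity between two vectors, from -1 to 1 (1 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(probe, enrolled, threshold=0.8):
    """Declare a match when the similarity score reaches the threshold."""
    return cosine_similarity(probe, enrolled) >= threshold

# Hypothetical face vectors (real systems use hundreds of dimensions).
enrolled = [0.9, 0.1, 0.4]
same_person_poor_lighting = [0.7, 0.3, 0.5]
different_person = [0.2, 0.9, 0.3]

print(is_match(same_person_poor_lighting, enrolled))        # default threshold
print(is_match(different_person, enrolled))                 # default threshold
print(is_match(same_person_poor_lighting, enrolled, 0.99))  # stricter threshold
print(is_match(different_person, enrolled, 0.4))            # looser threshold
```

Because the output is a score rather than a yes or no, every threshold trades one kind of error for another: a strict setting rejects genuine matches, while a loose one accepts the wrong person.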

Image recognition systems from Microsoft, IBM and Megvii all performed poorly when identifying people of color. The most obvious reason is that these facial recognition systems were not trained on a diverse enough set of faces. This is an eye-opening insight into the potential risks of these systems and their vulnerability to bias, and it only intensifies the need for diverse training sets.
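One practical first step toward a more diverse training set is simply measuring who is in it. The sketch below assumes each training image carries a demographic label, which is not always the case in practice. The group names, the counts and the 20% minimum share are hypothetical values picked for illustration.

```python
from collections import Counter

def underrepresented_groups(labels, min_share=0.2):
    """Return {group: share} for groups below min_share of the dataset."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }

# Hypothetical label counts for a 100-image training set.
labels = (
    ["lighter_male"] * 50
    + ["lighter_female"] * 30
    + ["darker_male"] * 15
    + ["darker_female"] * 5
)

flagged = underrepresented_groups(labels)
print(flagged)
```

A check like this only catches imbalance along the attributes that were labeled, so bias tied to unlabeled factors such as lighting or camera angle would need its own audit.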

Facial recognition can provide value and increase efficiency in our daily lives, but the technology is not without its risks. Some enterprises are more open to it than others, and as it develops, both helpful and harmful use cases will emerge. Understanding what the technology can and cannot do is an effective way to manage the problems it raises. For now, this is one piece of the future that may very well change the face of the world.