Pushing past chatbot challenges will secure their longevity

For chatbots to have a future in the enterprise, developers and data scientists need to get flexible. From open source to intelligent sharing, chatbot collaboration will boost benefits.

For chatbots to complete the shift from novelty assistants to enterprise mainstays, developers need to think about open source options, intelligent interfaces and how to build chatbots with the future of technology in mind, experts say.

Open environment eases integration

Chatbots are often single-source systems that work off proprietary information, but experts say that sharing and integration will be a large part of overcoming some of the most common chatbot challenges today. Chatbots will need to share information across applications, platforms and systems to create collaborative intelligent routing.

Routed information can range from documents and paperwork to user preferences, FAQs and knowledge bases. Intelligent routing would, for example, connect documents, information and knowledge from HR chatbots to keyword recognition chatbots -- automatically sharing information to optimize knowledge management.

"In the coming months, we expect to see enterprises form an intranet of conversational AI applications that are able to work together seamlessly, sharing information," said Andy Peart, chief marketing and strategy officer of Artificial Solutions, a natural language software company based in Sweden.

"Intelligent routing will allow for the handover process between apps to occur in several different ways, including the ability for a master application or super-bot to deliver it themselves and the ability to prioritize the order in which knowledge resources are delivered," he said.

This is difficult today because the training data and code behind chatbots are often proprietary.
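The handover Peart describes, in which a master application delivers answers itself and controls the order in which knowledge resources appear, can be illustrated with a small sketch. This is only an assumption-laden toy, not any vendor's implementation: the `SpecialistBot` and `MasterBot` classes, the `priority` field and the keyword matching are all hypothetical names and simplifications introduced here for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class SpecialistBot:
    """Hypothetical single-domain bot with its own small knowledge base."""
    name: str
    priority: int  # assumption: lower number means its answers are delivered first
    knowledge: Dict[str, str] = field(default_factory=dict)

    def answer(self, query: str) -> List[str]:
        # Naive keyword match: return every entry whose keyword appears in the query.
        words = set(re.findall(r"[a-z]+", query.lower()))
        return [text for keyword, text in self.knowledge.items() if keyword in words]


class MasterBot:
    """Hypothetical 'super-bot' that gathers answers and delivers them itself."""

    def __init__(self, bots: List[SpecialistBot]):
        # Sort once so knowledge resources are always delivered in priority order.
        self.bots = sorted(bots, key=lambda bot: bot.priority)

    def route(self, query: str) -> List[Tuple[str, str]]:
        results = []
        for bot in self.bots:
            for text in bot.answer(query):
                results.append((bot.name, text))
        return results


hr_bot = SpecialistBot(
    "hr", priority=1,
    knowledge={"vacation": "Vacation policy: see the HR handbook."},
)
it_bot = SpecialistBot(
    "it", priority=2,
    knowledge={
        "vacation": "Set an out-of-office reply before vacation.",
        "password": "Reset passwords in the self-service portal.",
    },
)

master = MasterBot([it_bot, hr_bot])
answers = master.route("How do I request vacation?")
for source, text in answers:
    print(f"[{source}] {text}")
```

A real deployment would replace the keyword match with each bot's own language understanding, but the shape stays the same: the specialists need a shared interface, which is exactly what proprietary training data and code make hard to agree on.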

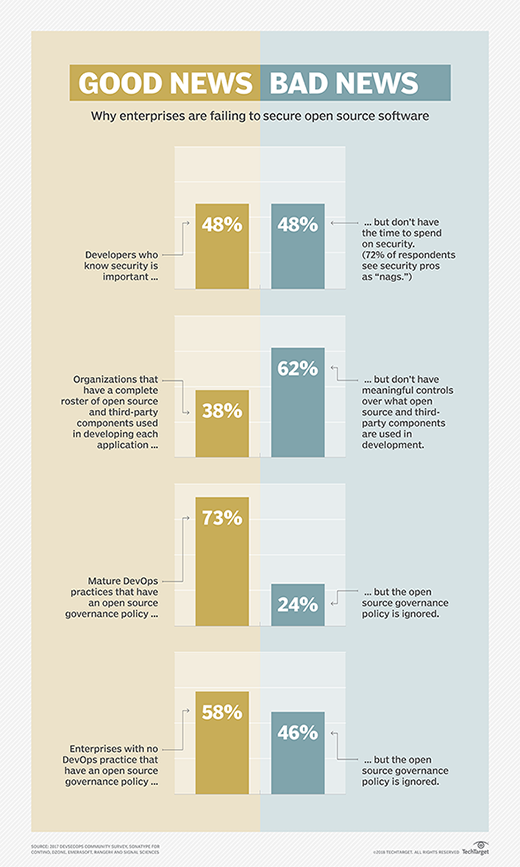

Open source chatbots

AI technologies are mostly proprietary, with many of the cutting-edge technological advancements being spearheaded by a few tech giants. Tim Deeson, CEO and co-founder of London-based chatbot development studio GreenShoot Labs, said he sees open source chatbots as a part of the solution to AI bias, removing financial barriers to AI entry and creating user control, some of the most common chatbot challenges.

"When you don't have any control or insight over the conclusions a system is making, what can often happen is that it replicates or creates biases, giving you no option to examine that bias," Deeson said. "Open source platforms are giving us back that control, and they are allowing us to develop new automation-based solutions."

Peart said he thinks open source tools can help with some other challenges in chatbot development, including collaboration bottlenecks, limited staff and the high cost of development.

"Many of today's development tools require large teams of highly specialized staff to build and maintain their applications. Along with other challenges in actually building an initial application, these issues can make developing chatbots an extremely expensive outlay before enterprises see any benefit," Peart said.

Reducing the financial barrier to allow data scientists to work, create and train chatbots can open the door to faster, more multidirectional development.

Multiuse bots not ready for the enterprise

Companies are beginning to focus on creating bots that can perform multiple jobs. These multiuse bots can find siloed data or create news reports in addition to answering simple questions or performing help desk tasks. While they promise a better experience and less need to develop multiple bots, experts say that simply perfecting single-use chatbots will ultimately deliver better results.

The best chatbots should focus on understanding user intent for a particular domain and performing specific tasks really well, rather than being a general-purpose tool, said Ramesh Hariharan, co-founder and CTO of LatentView Analytics.

Deeson also said that chatbots focusing on single use cases will allow a higher level of autonomy for bots, and enterprises will soon be able to delegate with increasing levels of sophistication.

"We're already moving into the realm of having an agent who works on your behalf or an assistant who understands your goals and motivations," Deeson said.

Focusing on single use cases doesn't necessarily mean only developing and perfecting a chatbot that can route help desk tickets; it can mean creating a customer service chatbot that is limited in scope but completes multiple, related tasks more intelligently.
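As a rough illustration of a bot that is limited in scope yet covers several related tasks, the sketch below maps a message to one of a few customer service intents and hands anything else to a person. The intent names, keyword sets and the `handoff_to_human` fallback are hypothetical, and keyword overlap stands in for the trained intent model a production bot would use.

```python
import re
from typing import Optional

# Hypothetical intents for one narrowly scoped customer service bot.
INTENT_KEYWORDS = {
    "track_order": {"track", "tracking", "shipped", "delivery"},
    "start_return": {"return", "refund"},
    "update_address": {"address", "move", "moved"},
}


def detect_intent(message: str) -> Optional[str]:
    """Return the intent with the most keyword overlap, or None if out of scope."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {
        intent: len(words & keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def handle(message: str) -> str:
    """Dispatch in-scope requests; escalate everything else instead of guessing."""
    intent = detect_intent(message)
    if intent is None:
        return "handoff_to_human"
    return intent


print(handle("Where is my delivery?"))
print(handle("I want a refund"))
print(handle("What's the weather like?"))
```

Keeping the intent list short is the design choice here: the bot does a handful of related jobs and declines the rest rather than acting as a general-purpose tool.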

Chatbots handling intelligent, related tasks will aid in the maturation of customer service and augmented workforces, said Agustin Huerta, vice president of technology at Globant, a digitally native technology services company.

NLP and intelligence

There is a push to train chatbots with natural language processing (NLP). And when looking toward the future of chatbots, solving the issues around bots' limited language skills becomes pivotal to what experts perceive as autonomous intelligence.

"As natural language processing gets more and more sophisticated, we will start to see chatbots that appear to be more intelligent, that can understand more complex sentences and conversations and have flexibility," Deeson said.

Peart also noted the enterprise-wide desire for intelligent chatbots that can maintain more natural conversations.

"Conversational AI is becoming a key interface on some digital transformation projects where the ability to work alongside other emerging technology such as [robotic process automation] or augmented reality is seen as a major enabler," Peart said.

The future of chatbots will rely not only on the intelligent interface that employees and customers interact with, but also on intelligent internal processing.

"The more intelligent a chatbot gets, the more nuanced tasks can be accomplished, the faster setup can be achieved, and the less maintenance is required," Huerta said.

The endgame of chatbots

When developing chatbots for the future, working toward an ultimate goal sharpens use cases and guides corporate investment and developer goals. Many experts said the end goal of chatbot development is perfecting the technology's use in the enterprise, from increasing ROI to decreasing customer service help times. Overcoming today's chatbot challenges requires deploying the technology judiciously.

Wai Wong, founder and CEO of Serviceaide, a California-based software company, sees the ultimate goal of chatbots as depending on the enterprise implementing the technology.

"Some organizations will use chatbots to offload mundane and repetitive tasks from their human employees to increase job satisfaction and efficiency -- that's one of the key use cases we see today -- while others will want to use AI to augment the knowledge sharing, collaboration and decision support capabilities of existing teams," Wong said.

The end goal of chatbots will continue to evolve with the technology. As one goal is reached, another will become imperative. Deeson urged users to keep in mind that the overarching goal, increased access to services, should drive all research and development.

"Increased access to services through voice and text interfaces means that we're now able to provide simpler and more effective services that can, in many use cases, benefit society," Deeson said.