New uses for GAN technology focus on optimizing existing tech

GAN technology has emerged as the latest facet of AI that can be applied to existing technology to stack learning. What else can it do? Here are emerging use cases for GANs.

Generative adversarial networks first attracted attention through realistically generated audio of Barack Obama reciting the lyrics to pop songs. Beyond the shock value and social media attention, early GAN technology focused on simulating voices, videos and realistic text. At the Re•Work Deep Learning Summit in San Francisco, experts explored other ways GAN technology is starting to be implemented.

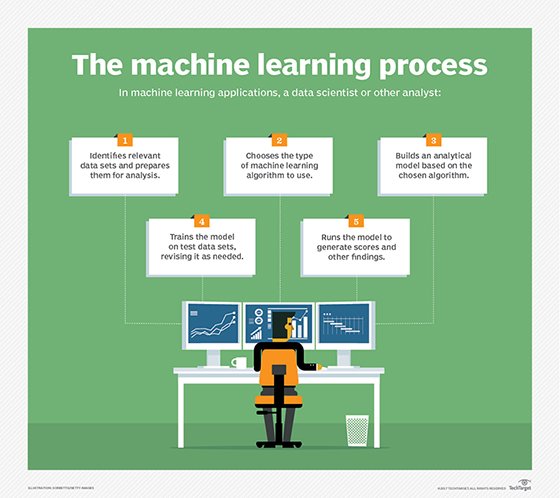

Most machine learning algorithms are focused on optimization, Ian Goodfellow, research scientist at Google Brain and a GAN pioneer, noted. While this is a good approach for training a classifier to have a low error rate, these algorithms sometimes settle on locally optimal models rather than the best ones.

In contrast, adversarial machine learning is based on game theory: two algorithms compete, with one trying to minimize a value function while the other tries to maximize it. This method is akin to a game of chess, where one player is trying to maximize her score, while her opponent is trying to minimize it. The game setting helps identify an equilibrium point between one algorithm building a better classifier and the other building a better simulation. Now, Goodfellow is seeing an explosion of these techniques being applied to different types of problems.
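The minimize-versus-maximize setup can be made concrete with a toy example. The sketch below is not from the talk: it assumes a hypothetical two-variable value function and has one player take gradient steps downhill on it while the other takes steps uphill, until both settle at the equilibrium point.

```python
# Toy minimax game (illustrative assumption, not a real GAN).
# Player x tries to minimize value(x, y); player y tries to maximize it.

def value(x, y):
    # Hypothetical value function with a single saddle point at (0, 0).
    return 0.5 * x * x - 0.5 * y * y + x * y

def play_minimax(x=1.0, y=1.0, lr=0.1, steps=200):
    for _ in range(steps):
        grad_x = x + y   # partial derivative of value with respect to x
        grad_y = x - y   # partial derivative of value with respect to y
        # Simultaneous update: x steps downhill, y steps uphill.
        x, y = x - lr * grad_x, y + lr * grad_y
    return x, y

x_final, y_final = play_minimax()
print(x_final, y_final)  # both values end up very close to 0
```

In this toy game, neither player can improve its outcome by moving away from the point (0, 0) on its own, which is what makes it an equilibrium. Real GAN training plays the same kind of game over millions of neural network weights, where convergence is far less predictable.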

Generative models

The original use of GAN technology focused on generative modeling. For example, generative modeling could be used to create a face with the nose, eyes and mouth in the appropriate place. In this case, the generator algorithm is being optimized to create more realistic-looking faces, and the discriminator is being optimized to improve its ability to detect fake faces.
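One common way to express these two competing objectives is with binary cross-entropy losses. The sketch below assumes that formulation, along with the widely used "non-saturating" generator loss. The function names are hypothetical, and the inputs stand in for the probabilities a discriminator would assign to real and generated faces.

```python
import math

def discriminator_loss(d_real, d_fake):
    # d_real: discriminator's "is real" probabilities for real samples.
    # d_fake: discriminator's "is real" probabilities for generated samples.
    # The loss is low when real samples score near 1 and fakes score near 0.
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)

def generator_loss(d_fake):
    # Non-saturating variant (an assumption): the generator's loss is low
    # when the discriminator scores its fakes as likely to be real.
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# A discriminator that cannot tell real from fake outputs 0.5 for everything.
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))
# A generator that fools the discriminator has a lower loss than one that doesn't.
print(generator_loss([0.9]), generator_loss([0.1]))
```

During training, each network would be updated to lower its own loss, which pushes the other network's loss up.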

These applications have advanced through improvements in backpropagation that help the two algorithms learn faster with less computational overhead. Over the last couple of years, these algorithms have evolved from being able to generate low-resolution grayscale images to high-resolution color faces, thanks to better algorithms, data sets and GPUs.

Image translation

Another line of GAN research has explored unsupervised image-to-image translation problems. Translations can generate a set of night images of a freeway from daylight imagery -- improving navigation for autonomous vehicles that need to pilot in day, night, rain or snow. Current image algorithms require supervised learning techniques that involve researchers capturing and labeling data about the same roads in different weather conditions. The promise of GANs is to automate the process of training a generator capable of creating plausible road image sets simulating different driving conditions. This approach could reduce the human work in capturing data from different conditions and better train autonomous driving algorithms.

This same basic approach was applied to translating human poses to new bodies. Researchers at the University of California, Berkeley completed a project to translate dance move imagery across bodies. By taking video of one person's dance moves and transferring them onto another person's image, the machine learning system was able to replicate the motion.

GANufacturing

GAN approaches could be adapted to work with 3D data to customize the production of physical products, an area that Goodfellow has dubbed GANufacturing. Researchers are looking at using GANs and 3D printing to build better teeth. Dentists often spend considerable time and effort creating custom dental crowns for each mouth. Not only does the new crown have to fit the 3D shape that works with other teeth, but it also must work well with the pattern of an individual's bite, needing to be tested on a bite cloth or ground down to fit. By implementing GAN technology that works with 3D data captured from a person's mouth, dentists could generate a near-perfect, realistic and functional tooth that could be printed on site.

Security

Image recognition algorithms are meant to reliably identify objects in an image, but an adversary can generate a small amount of noise in training data that confounds the algorithm. Some patterns of noise make it impossible to recognize an intended object. GANs could help simulate these patterns, which could lead to more robust classifiers.
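To see how a small amount of noise can flip a decision, consider a toy linear classifier. The sketch below is not a GAN and does not come from the talk. It assumes a fast-gradient-sign-style perturbation applied to a single input, and the weights and feature values are made up for illustration.

```python
def score(weights, bias, features):
    # Linear classifier score: positive means class 1, otherwise class 0.
    return sum(w * f for w, f in zip(weights, features)) + bias

def predict(weights, bias, features):
    return 1 if score(weights, bias, features) > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def adversarial_example(weights, bias, features, epsilon):
    # For a linear model, the gradient of the score with respect to the
    # input is just the weight vector. Nudge every feature by at most
    # epsilon in the direction that pushes the score toward the other class.
    direction = -1 if predict(weights, bias, features) == 1 else 1
    return [f + direction * epsilon * sign(w) for w, f in zip(weights, features)]

# Hypothetical model and input, chosen only for illustration.
weights = [0.5, -0.25, 0.75, -1.0]
bias = 0.0
original = [0.2, -0.1, 0.1, -0.05]

perturbed = adversarial_example(weights, bias, original, epsilon=0.15)
print(predict(weights, bias, original), predict(weights, bias, perturbed))
```

No feature moves by more than 0.15, yet the predicted class changes. Training a classifier on perturbed examples like these is one way researchers try to make it more robust.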

Researchers at New York University and Michigan State University have also demonstrated the ability to use GAN technology to generate fingerprints that can simulate the biometrics of a significant percentage of humans. Implications and uses for GAN fingerprints range from genetic studies to skeleton keys for locked phones.

Model-based optimization

Creating better industrial designs involves navigating several tradeoffs that improve a product or process, while minimizing the side effects of design choices. Research in model-based optimization looks at how we might create better model inputs -- perhaps by taking a model of a car and extrapolating that design to create a faster car based on training data. It could also lead to faster chips or better medicine, Goodfellow said.
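The idea of searching for better model inputs can be sketched with a toy example. The code below assumes a hypothetical surrogate model that scores a design from two made-up parameters, then uses gradient ascent on the inputs to find a higher-scoring design. A real system would use a model learned from training data rather than a hand-written formula.

```python
def surrogate_score(design):
    # Hypothetical stand-in for a learned model: scores a design from two
    # parameters and peaks at (3.0, -1.0).
    a, b = design
    return 10.0 - (a - 3.0) ** 2 - (b + 1.0) ** 2

def numerical_gradient(model, design, h=1e-5):
    # Central-difference estimate of how the score changes with each input.
    grad = []
    for i in range(len(design)):
        up = list(design)
        up[i] += h
        down = list(design)
        down[i] -= h
        grad.append((model(up) - model(down)) / (2 * h))
    return grad

def optimize_design(model, design, lr=0.1, steps=200):
    # Gradient ascent on the model's inputs, not on its weights.
    design = list(design)
    for _ in range(steps):
        grad = numerical_gradient(model, design)
        design = [d + lr * g for d, g in zip(design, grad)]
    return design

start = [0.0, 0.0]
best = optimize_design(surrogate_score, start)
print(best, surrogate_score(best))
```

One known risk with this approach is that the optimizer can drift toward inputs the model scores highly but has never seen, so the predicted improvement may not hold up in the real world.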

Building better robots

It takes a lot of time to train robots in the real world, and the system is imperfect. When a robot inevitably makes a mistake, the result can be damaged, expensive equipment. Being able to learn in a simulated world is safer and faster, Goodfellow said.

Researchers are looking at training robots in a virtual environment and then deploying them to a real one. GANs can be used to train different versions of the virtual environment that are economical in terms of compute and data while still providing a realistic simulation.

Improving interpretability

New data and privacy regulations are requiring enterprises to explain to consumers why particular decisions involving consumer data were made. The challenge is that, although machine learning can generate better algorithms for a particular metric -- like loan default rate -- it's hard to interpret particular decisions and to determine whether information like race, religion or sex directly or indirectly influenced them. Goodfellow expects to see a synthesis of GAN and interpretability research that could lead to more robust and interpretable machine learning algorithms.

Learning to learn

The most impressive AI feats to date created systems that can do one thing well but are not generalized to other domains, said Chelsea Finn, research scientist at Google Brain and Berkeley AI Research Lab. Systems that can beat world-class players at backgammon, Jeopardy! or Go are impressive -- but are limited outside their specific function.

Finn has been working on identifying algorithms for teaching AI systems to learn how to learn, taking a cue from artificial general intelligence goals. This could improve generality and versatility by building on previous experiences to learn new tasks.

Many of the subtasks are relatively simple, but mastering complex tasks is often a result of mastering several simple skills. Finn has started off building a better classifier that can distinguish among object classes in new data using a relatively small set of training data.

"The key idea is we want to train our system across many tasks, so when it sees a new task, it could learn quickly," she said.

Finn's team found some of its more promising results by combining bidirectional GANs (BiGANs) with other algorithms. BiGANs use a generative adversarial framework in which the generator algorithm produces synthetic image data from the real image. Going forward, the team is doing research on enabling robots to learn new tasks from watching humans.