self-driving car (autonomous car or driverless car)

What is a self-driving car?

A self-driving car (sometimes called an autonomous car or driverless car) is a vehicle that uses a combination of sensors, cameras, radar and artificial intelligence (AI) to travel between destinations without a human operator. To qualify as fully autonomous, a vehicle must be able to navigate without human intervention to a predetermined destination over roads that have not been adapted for its use.

Companies developing and/or testing autonomous cars include Audi, BMW, Ford, Google, General Motors, Tesla, Volkswagen and Volvo. Google's test involved a fleet of self-driving cars -- including Toyota Prii and an Audi TT -- navigating over 140,000 miles of California streets and highways.

How self-driving cars work

AI technologies power self-driving car systems. Developers of self-driving cars use vast amounts of data from image recognition systems, along with machine learning and neural networks, to build systems that can drive autonomously.

Neural networks, a type of machine learning model, identify patterns in this data. The data includes images from cameras on self-driving cars, from which the neural network learns to identify traffic lights, trees, curbs, pedestrians, street signs and other parts of any given driving environment.

For example, Google's self-driving car project, Waymo, uses a mix of sensors, lidar (light detection and ranging, a technology similar to radar that uses laser light instead of radio waves) and cameras. It combines all of the data those systems generate to identify everything around the vehicle and predict what those objects might do next, all within fractions of a second. Experience matters for these systems: the more the system drives, the more data it can incorporate into its deep learning algorithms, enabling it to make more nuanced driving choices.
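Waymo has not published the details of how it combines its sensor data, so the following is only an illustration of the general idea. It assumes inverse-variance weighting, a standard way to merge independent measurements of the same quantity: each sensor's distance estimate to an object is weighted by how precise that sensor is. The `RangeEstimate` type, the `fuse_ranges` function and the variance values in the usage example are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RangeEstimate:
    """One sensor's estimate of the distance to a single detected object."""
    sensor: str        # hypothetical label, e.g. "lidar", "radar" or "camera"
    distance_m: float  # estimated distance to the object, in meters
    variance: float    # measurement variance in m^2; smaller means more precise


def fuse_ranges(estimates: list[RangeEstimate]) -> tuple[float, float]:
    """Fuse independent range estimates by inverse-variance weighting.

    Returns (fused_distance_m, fused_variance). Precise sensors get large
    weights (1 / variance), so they dominate noisier ones.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    if any(e.variance <= 0 for e in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / e.variance for e in estimates]
    total_weight = sum(weights)
    fused = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total_weight
    # The fused variance is smaller than any single sensor's variance.
    return fused, 1.0 / total_weight


# Usage with made-up numbers: a precise lidar, a slightly noisier radar
# and a much noisier camera-based depth estimate of the same pedestrian.
readings = [
    RangeEstimate("lidar", 20.0, 0.01),
    RangeEstimate("radar", 20.4, 0.04),
    RangeEstimate("camera", 21.0, 1.0),
]
distance, variance = fuse_ranges(readings)
print(f"{distance:.2f} m (variance {variance:.4f} m^2)")
```

The fused distance lands close to the lidar reading because lidar has the smallest variance, while the noisy camera estimate barely moves it.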

The following outlines how Google Waymo vehicles work:

- The driver (or passenger) sets a destination. The car's software calculates a route.

- A rotating, roof-mounted lidar sensor monitors a 60-meter range around the car and creates a dynamic three-dimensional (3D) map of the car's current environment.

- A sensor on the left rear wheel monitors sideways movement to detect the car's position relative to the 3D map.

- Radar systems in the front and rear bumpers calculate distances to obstacles.

- AI software in the car is connected to all the sensors and collects input from Google Street View and video cameras inside the car.

- The AI simulates human perceptual and decision-making processes using deep learning and controls actions in driver control systems, such as steering and brakes.

- The car's software consults Google Maps for advance notice of things like landmarks, traffic signs and lights.

- An override function is available to let a human take control of the vehicle.
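The lidar step in the list above rests on a simple time-of-flight relation: the sensor fires a laser pulse and times its echo, and because the light travels out and back, the distance is half the round-trip time multiplied by the speed of light. The sketch below shows that relation plus a filter that keeps only returns inside the 60-meter range mentioned above. The function names are hypothetical, and real lidar processing also handles noise, multiple echoes and sensor motion. Automotive radar often measures distance differently (for example, with frequency-modulated continuous-wave signals), so this sketch covers pulsed lidar only.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the meter
LIDAR_RANGE_M = 60.0                # monitoring radius stated in the list above


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a target from a laser pulse's round-trip time.

    The pulse travels to the target and back, so divide by 2.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def points_in_range(points, max_range_m=LIDAR_RANGE_M):
    """Keep (x, y, z) points, in meters relative to the sensor, within max_range_m."""
    return [p for p in points if math.hypot(*p) <= max_range_m]


# An echo arriving 400 nanoseconds after the pulse left is about 60 m away.
print(round(tof_distance_m(400e-9), 2))

# Only the first and last points fall inside the 60 m monitoring radius.
cloud = [(3.0, 4.0, 0.0), (50.0, 40.0, 0.0), (0.0, 0.0, 60.0)]
print(points_in_range(cloud))
```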

Cars with self-driving features

Google's Waymo project is an example of a self-driving car that is almost entirely autonomous. A human driver must still be present, but only to override the system when necessary. So while it is not self-driving in the purest sense, it has a high level of autonomy and can drive itself in ideal conditions.

Many of the cars available to consumers today have a lower level of autonomy but still have some self-driving features. Self-driving features that are available in many production cars as of 2022 include the following:

- Hands-free steering centers the car without the driver's hands on the wheel. The driver is still required to pay attention.

- Adaptive cruise control (ACC) automatically maintains a selectable distance between the driver's car and the car in front.

- Lane-centering steering intervenes when the driver crosses lane markings by automatically nudging the vehicle toward the opposite lane marking.
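Manufacturers' adaptive cruise control implementations are proprietary and differ from car to car. As an illustration only, the sketch below assumes a common textbook approach, a constant time-gap policy, in which the desired following distance grows with speed. The `AccConfig` fields, the gains, the 1.8-second default gap and the acceleration limits are hypothetical values chosen for readability, not figures from any production system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AccConfig:
    """Hypothetical tuning values for an illustrative ACC controller."""
    set_speed_m_s: float           # cruise speed selected by the driver
    time_gap_s: float = 1.8        # driver-selectable following gap, in seconds
    standstill_gap_m: float = 5.0  # gap to keep when both cars are stopped
    k_gap: float = 0.2             # gain on gap error, 1/s^2
    k_speed: float = 0.6           # gain on speed difference to the lead car, 1/s
    k_cruise: float = 0.4          # gain for tracking the set speed, 1/s
    max_accel_m_s2: float = 2.0    # comfort limit for speeding up
    max_decel_m_s2: float = -3.5   # limit for braking


def acc_command(cfg: AccConfig, ego_speed_m_s: float,
                lead_gap_m: Optional[float] = None,
                lead_speed_m_s: Optional[float] = None) -> float:
    """Return a commanded acceleration in m/s^2 (negative means braking)."""
    # Cruise term: close the difference between current and set speed.
    cruise = cfg.k_cruise * (cfg.set_speed_m_s - ego_speed_m_s)
    if lead_gap_m is None or lead_speed_m_s is None:
        accel = cruise  # no car ahead: behave like ordinary cruise control
    else:
        # Constant time-gap policy: the desired gap grows with speed.
        desired_gap = cfg.standstill_gap_m + cfg.time_gap_s * ego_speed_m_s
        follow = (cfg.k_gap * (lead_gap_m - desired_gap)
                  + cfg.k_speed * (lead_speed_m_s - ego_speed_m_s))
        # Take the more cautious command: never exceed what cruise would request.
        accel = min(cruise, follow)
    # Clamp to the comfort and braking limits.
    return max(cfg.max_decel_m_s2, min(cfg.max_accel_m_s2, accel))


cfg = AccConfig(set_speed_m_s=30.0)
print(acc_command(cfg, ego_speed_m_s=25.0))  # open road
print(acc_command(cfg, ego_speed_m_s=25.0, lead_gap_m=20.0, lead_speed_m_s=20.0))  # slower car close ahead
```

Taking the minimum of the cruise and following commands means the car never speeds past the driver's set speed, even when the gap ahead is large.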

Levels of autonomy in self-driving cars

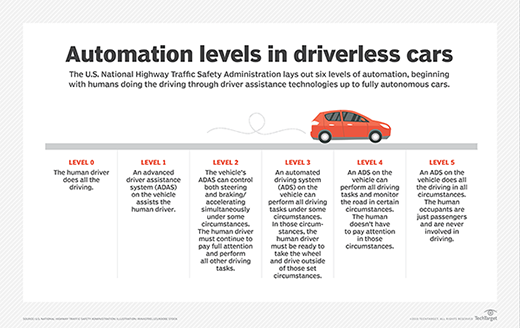

The U.S. National Highway Traffic Safety Administration (NHTSA) lays out six levels of automation, beginning with Level 0 where humans do the driving, through driver assistance technologies up to fully autonomous cars. Here are the five levels that follow Level 0 automation:

- Level 1: An advanced driver assistance system (ADAS) aids the human driver with steering, braking or accelerating, though not simultaneously. ADAS features include rearview cameras and warnings such as a vibrating seat that alerts drivers when they drift out of their lane.

- Level 2: An ADAS can steer and either brake or accelerate simultaneously, while the driver remains fully aware behind the wheel and continues to act as the driver.

- Level 3: An automated driving system (ADS) can perform all driving tasks under certain circumstances, such as parking the car. In these circumstances, the human driver must be ready to retake control whenever the ADS requests it. In all other circumstances, the human performs the driving task.

- Level 4: An ADS can perform all driving tasks and monitor the driving environment in certain circumstances. In those circumstances, the ADS is reliable enough that the human driver needn't pay attention.

- Level 5: The vehicle's ADS acts as a virtual chauffeur and does all the driving in all circumstances. The human occupants are passengers and are never expected to drive the vehicle.
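The levels above can be encoded as a small lookup, which is one way software such as a fleet dashboard or test harness might reason about who is responsible for driving. In this sketch the enum member names abbreviate the commonly used level labels (No Automation, Driver Assistance and so on); the `uses_ads` and `human_role` helpers are hypothetical, and the rule that a human drives outside a Level 4 system's supported circumstances is an assumption.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    """Driving automation levels 0-5 as described above."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def uses_ads(level: AutomationLevel) -> bool:
    """Levels 3-5 rely on an automated driving system (ADS); levels 1-2 are ADAS."""
    return level >= AutomationLevel.CONDITIONAL_AUTOMATION


def human_role(level: AutomationLevel, in_supported_conditions: bool) -> str:
    """Summarize what the human must do, following the level descriptions above.

    in_supported_conditions: whether the car is in the "certain circumstances"
    the ADS is designed for (only meaningful for levels 3 and 4).
    """
    if level <= AutomationLevel.PARTIAL_AUTOMATION:
        return "drive"     # the human drives and stays fully engaged
    if level == AutomationLevel.FULL_AUTOMATION:
        return "ride"      # occupants are passengers in all circumstances
    if not in_supported_conditions:
        return "drive"     # assumption: outside those circumstances, a human drives
    if level == AutomationLevel.CONDITIONAL_AUTOMATION:
        return "stand by"  # ready to retake control when the ADS requests it
    return "ride"          # level 4: no attention needed in supported conditions


for lvl in AutomationLevel:
    print(lvl.value, lvl.name, uses_ads(lvl), human_role(lvl, in_supported_conditions=True))
```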

Uses

As of 2022, carmakers have reached Level 4. However, manufacturers must clear a variety of technological milestones and address several important issues before fully autonomous vehicles can be purchased and used on public roads in the United States. Even though cars with Level 4 autonomy aren't available for public purchase, they are already used in other ways.

For example, Waymo offers a commercial ride-hailing service called Waymo One and has also partnered with Lyft to offer rides in its self-driving cars through the Lyft app. Riders can hail a self-driving car to take them to their destination and provide feedback to Waymo. Some cars still include a safety driver in case the ADS needs to be overridden. As of late 2022, the service is available only in the Phoenix metro area, San Francisco and, most recently, Los Angeles, but Waymo is looking to expand to more cities.

Autonomous street-sweeping vehicles are also being produced in China's Hunan province, meeting the Level 4 requirements for independently navigating a familiar environment with limited novel situations.

Projections from manufacturers vary on when Level 4 and 5 vehicles will be widely available. A successful Level 5 car must be able to react to novel driving situations as well as or better than a human can.

The pros and cons of self-driving cars

The top benefit touted by autonomous vehicle proponents is safety. A U.S. Department of Transportation and NHTSA statistical projection of traffic fatalities for 2017 estimated that 37,150 people died in motor vehicle traffic accidents that year. NHTSA estimated that 94% of serious crashes are due to human error or poor choices, such as drunk or distracted driving. Autonomous cars remove those risk factors from the equation -- though self-driving cars are still vulnerable to other factors, such as mechanical issues, that cause crashes.

If autonomous cars can significantly reduce the number of crashes, the economic benefits could be enormous. According to NHTSA, crash injuries cost $57.6 billion in lost workplace productivity and $594 billion due to loss of life and decreased quality of life.

In theory, if the roads were mostly occupied by autonomous cars, traffic would flow smoothly, and there would be less traffic congestion. In fully automated cars, the occupants could do productive activities while commuting to work. People who can't drive due to physical limitations could find new independence through autonomous vehicles and would have the opportunity to work in fields that require driving.

Autonomous trucks have been tested in the U.S. and Europe to let drivers use autopilot over long distances, freeing the driver to rest or complete tasks and improving driver safety and fuel efficiency. This initiative, called truck platooning, is powered by ACC, collision avoidance systems and vehicle-to-vehicle communications for cooperative ACC.
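Cooperative ACC differs from ordinary ACC in that each truck also receives the acceleration of the vehicle ahead over vehicle-to-vehicle communications, so it can start braking as soon as the lead truck does instead of waiting for the gap to shrink; that earlier reaction is what lets platooning trucks follow more closely. The sketch below assumes a simple form of this idea: a time-gap feedback term on what the follower's own sensors measure, plus a feedforward term on the broadcast acceleration. The function name, the 0.6-second gap and the gains are hypothetical.

```python
def cacc_command(gap_m: float, ego_speed_m_s: float, lead_speed_m_s: float,
                 lead_accel_v2v_m_s2: float, time_gap_s: float = 0.6,
                 standstill_gap_m: float = 3.0, k_gap: float = 0.25,
                 k_speed: float = 0.7, k_ff: float = 1.0) -> float:
    """Commanded acceleration (m/s^2) for a following truck in a platoon.

    All default parameter values are illustrative assumptions.
    """
    # Feedback on what the follower measures itself (gap and speed difference).
    desired_gap = standstill_gap_m + time_gap_s * ego_speed_m_s
    feedback = k_gap * (gap_m - desired_gap) + k_speed * (lead_speed_m_s - ego_speed_m_s)
    # Feedforward on the leader's acceleration received over V2V: the follower
    # reacts to braking before the gap or speed difference has changed.
    return feedback + k_ff * lead_accel_v2v_m_s2


# Both trucks at 25 m/s with the desired 18 m gap; the leader starts braking at 2 m/s^2.
print(cacc_command(gap_m=18.0, ego_speed_m_s=25.0, lead_speed_m_s=25.0,
                   lead_accel_v2v_m_s2=-2.0))
```

In this example the follower immediately requests about -2 m/s^2. Setting `k_ff=0.0` turns the sketch into sensor-only following, which would request nothing at this instant because the gap and speeds have not yet changed.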

One downside of self-driving technology is that riding in a vehicle without a driver behind the steering wheel may be unnerving, at least at first. A more serious risk is that, as self-driving capabilities become commonplace, human drivers may become overly reliant on the autopilot technology and leave their safety in the hands of automation, even when they should act as backup drivers in case of software failures or mechanical issues.

In one example from March 2018, a Tesla Model X SUV was on Autopilot when it crashed into a highway lane divider. According to the company, the driver's hands were not on the wheel despite visual warnings and an audible warning to put his hands back on the steering wheel. In another crash, a Tesla's AI failed to distinguish the bright white side of a truck from the sky.

Self-driving car safety and challenges

Autonomous cars must learn to identify countless objects in the vehicle's path, from branches and litter to animals and people. Other challenges on the road include tunnels that interfere with GPS and construction projects that force lane changes. Some situations also require complex decisions, such as where to stop to let emergency vehicles pass.
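One common way to bridge a GPS outage, such as in a tunnel, is dead reckoning: updating the last known position from the distance traveled (for example, from a wheel sensor like the one described earlier) and the change in heading (for example, from a gyroscope). The sketch below is a minimal illustration under those assumptions; the `Pose` type and `dead_reckon` function are hypothetical. Because small measurement errors accumulate with every step, real vehicles combine this with other sources, such as matching lidar scans against a map.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x_m: float          # east position, meters
    y_m: float          # north position, meters
    heading_rad: float  # 0 = east, counterclockwise positive


def dead_reckon(pose: Pose, distance_m: float, yaw_rate_rad_s: float, dt_s: float) -> Pose:
    """Advance a pose by one time step without GPS.

    distance_m: distance traveled during the step (e.g. from wheel odometry).
    yaw_rate_rad_s: turn rate during the step (e.g. from a gyroscope).
    """
    # Use the heading at the middle of the step to approximate a curved path.
    mid_heading = pose.heading_rad + 0.5 * yaw_rate_rad_s * dt_s
    return Pose(
        x_m=pose.x_m + distance_m * math.cos(mid_heading),
        y_m=pose.y_m + distance_m * math.sin(mid_heading),
        heading_rad=pose.heading_rad + yaw_rate_rad_s * dt_s,
    )


# Enter a tunnel heading east, then drive 10 steps of 2 m while turning gently left.
pose = Pose(0.0, 0.0, 0.0)
for _ in range(10):
    pose = dead_reckon(pose, distance_m=2.0, yaw_rate_rad_s=0.05, dt_s=0.1)
print(pose)
```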

The systems need to make instantaneous decisions on when to slow down, swerve or continue accelerating normally. This is a continuing challenge for developers, and there are reports of self-driving cars hesitating and swerving unnecessarily when objects are detected in or near the roadways.
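A widely used quantity for this kind of decision is time to collision (TTC): the distance to an object divided by the speed at which the car is closing on it. The sketch below assumes a deliberately simple rule set built on TTC. The thresholds, the `choose_action` function and the rule of swerving only when an adjacent lane is known to be clear are hypothetical; production planners weigh many more factors, including uncertainty about whether a detected object is real, which is one source of the hesitation and unnecessary swerving described above.

```python
import math


def time_to_collision_s(distance_m: float, closing_speed_m_s: float) -> float:
    """Seconds until contact if neither party changes speed; infinite if not closing."""
    if closing_speed_m_s <= 0.0:
        return math.inf
    return distance_m / closing_speed_m_s


def choose_action(distance_m: float, closing_speed_m_s: float,
                  adjacent_lane_clear: bool = False,
                  slow_down_ttc_s: float = 4.0,
                  emergency_ttc_s: float = 1.5) -> str:
    """Pick 'continue', 'slow_down', 'swerve' or 'emergency_brake' (illustrative rules)."""
    ttc = time_to_collision_s(distance_m, closing_speed_m_s)
    if ttc < emergency_ttc_s:
        # Too close to stop comfortably: swerve only if the next lane is known to be clear.
        return "swerve" if adjacent_lane_clear else "emergency_brake"
    if ttc < slow_down_ttc_s:
        return "slow_down"
    return "continue"


# Object 30 m ahead, closing at 10 m/s: TTC is 3 s, so the rule says slow down.
print(choose_action(30.0, 10.0))
```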

This problem was evident in a fatal crash in March 2018 involving an autonomous car operated by Uber. The company reported that the vehicle's software identified a pedestrian but deemed it a false positive and failed to swerve to avoid hitting her. After this crash, Toyota temporarily halted its testing of self-driving cars on public roads while continuing to test elsewhere. The Toyota Research Institute began building a test facility on a 60-acre site in Michigan to further develop automated vehicle technology.

With crashes also comes the question of liability, and lawmakers have yet to define who is liable when an autonomous car is involved in an accident. There are also serious concerns that the software used to operate autonomous vehicles can be hacked, and automotive companies are working to address cybersecurity risks.

Carmakers are subject to Federal Motor Vehicle Safety Standards, and NHTSA has reported that more work must be done for automated vehicles to meet those standards.

In China, carmakers and regulators are adopting a different strategy to meet standards and make self-driving cars an everyday reality. The Chinese government is beginning to redesign urban landscapes, policy and infrastructure to make the environment friendlier to self-driving cars. This includes writing rules about how humans move around and recruiting mobile network operators to take on a portion of the processing required to give self-driving vehicles the data they need to navigate. The plans also include designated "National Test Roads." The autocratic nature of the Chinese government makes this approach possible, bypassing the litigious democratic process that testing must go through in the United States.

History of self-driving cars

The path toward self-driving cars began before the year 2000 with incremental automation features for safety and convenience, such as cruise control and antilock brakes. After the turn of the millennium, advanced safety features, including electronic stability control, blind-spot detection, and forward collision and lane departure warnings, became available in vehicles. According to NHTSA, advanced driver assistance capabilities such as rearview video cameras, automatic emergency braking and lane-centering assistance emerged between 2010 and 2016.

Since 2016, cars have moved toward partial autonomy, with features that help drivers stay in their lane, along with ACC technology and the ability to self-park.

Fully automated vehicles are not publicly available yet and may not be for many years. In the U.S., NHTSA provides federal guidance for introducing a new ADS onto public roads, and that guidance will evolve as autonomous car technologies advance.

Self-driving cars are not yet legal on most roads. In June 2011, Nevada became the first jurisdiction in the world to allow driverless cars to be tested on public roadways; California, Florida, Ohio and Washington, D.C., have followed in the years since.

The history of driverless cars goes back much further than that. Leonardo da Vinci designed the first prototype around 1478. Da Vinci's car was designed as a self-propelled robot powered by springs, with programmable steering and the ability to run preset courses.