singularity

What is the singularity?

In technology, the singularity describes a hypothetical future in which technological growth becomes uncontrollable and irreversible. In this scenario, intelligent and powerful technologies would radically and unpredictably transform our reality.

The word singularity has many different meanings in science and mathematics. It all depends on the context. For example, in natural sciences, singularity describes dynamical systems and social systems where a small change may have an enormous impact.

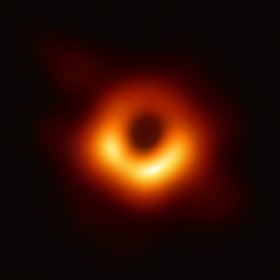

The technological use of singularity took its name from physics. The term first came into popular use with Albert Einstein's 1915 Theory of General Relativity. In the theory, a singularity describes the center of a black hole, a point of infinite density and gravity from which nothing, not even light, can escape. The current knowledge of physics breaks down at the singularity and can't describe reality inside of it.

When singularity is used to describe the future, the focus is on extreme uncertainty and irreversibility. The term is used to describe the hypothetical point at which technology -- in particular artificial intelligence (AI) powered by machine learning algorithms -- reaches a superhuman level of intelligence and capability.

What is the singularity in technology?

A singularity in technology would be a situation in which computer programs become so advanced that AI transcends human intelligence, potentially erasing the boundary between humanity and computers. The singularity would also involve an increase in technological connectivity with the human body, such as brain-computer interfaces, biological alteration of the brain, brain implants and genetic engineering. Neuro-nanotechnology, such as Elon Musk's experimental brain implant, Neuralink, is perceived as one of the key technologies that could make the singularity a reality.

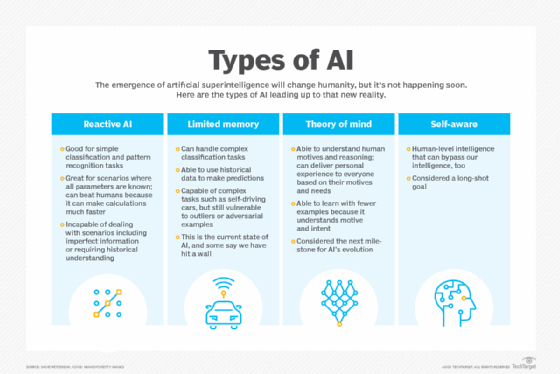

This intelligence explosion would significantly impact human civilization. First, AI would reach human levels of consciousness, intelligence and capabilities, a milestone known as artificial general intelligence (AGI). Currently, there are no real-world examples of AGI. Some technologies, such as generative AI programs like ChatGPT, exhibit human-like capabilities, but they're limited to only one field. AGI refers to machines that combine multiple capacities, allowing them to do anything a human can do.

According to the theory of the singularity, after reaching AGI, these computer programs and AI would turn into superintelligent machines with cognitive capacities beyond those of humans. At this point, human beings would no longer have control over them.

Machine intelligence superior to the human brain is also known as artificial superintelligence, a highly speculative concept. According to the theory, significant innovations in genetics, nanotechnology, automation and robotics will lay the foundation for singularity during the first half of the 21st century.

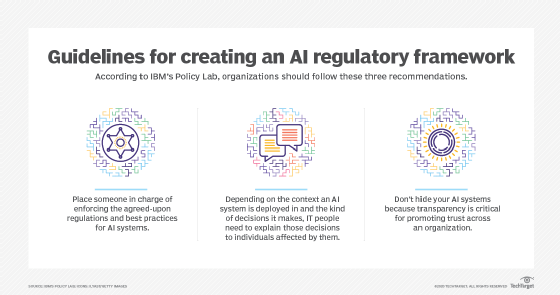

Entrepreneurs and public figures like Elon Musk and Stephen Hawking have expressed concerns that advances in AI could lead to human extinction. In 2023, Musk and other AI professionals signed an open letter urging a six-month pause on the training of AI systems more powerful than GPT-4 until regulations and responsible AI practices have been established.

While the term technological singularity often comes up in AI discussions, technologists and scientists disagree about its meaning. However, many experts agree that there will be a turning point when we witness the emergence of superintelligence. They also agree on crucial aspects of singularity, such as smart systems improving themselves at an exponential rate.

History of singularity in technology

John von Neumann, a Hungarian-American mathematician, computer scientist, engineer, physicist and polymath, first discussed the concept of technological singularity in the mid-20th century. Since then, many authors have either echoed this viewpoint or adapted it in their science fiction writing.

These sci-fi stories were often apocalyptic, describing a future where a superintelligence upgrades itself and accelerates development at an incomprehensible rate. In this scenario, a machine takes over its own development from its human creator, who lacks the machine's cognitive capabilities. According to singularity theory, self-directed computers could achieve superintelligence by developing at an exponential rate, not incrementally.
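The difference between exponential and incremental development can be illustrated with a toy calculation. The sketch below is only an illustration: the "capability" units, the starting value, the step size and the doubling factor are all hypothetical numbers chosen for the example, not a forecast or a model taken from singularity theory.

```python
def incremental_growth(start: float, step: float, generations: int) -> float:
    """Capability after adding a fixed step in every generation."""
    return start + step * generations


def exponential_growth(start: float, factor: float, generations: int) -> float:
    """Capability after multiplying by a fixed factor in every generation."""
    return start * factor ** generations


if __name__ == "__main__":
    # Hypothetical numbers: start at 1 unit, then either add 1 unit
    # or double the capability in each generation.
    for generations in (1, 10, 20, 30):
        linear = incremental_growth(1, 1, generations)
        doubling = exponential_growth(1, 2, generations)
        print(f"generation {generations:>2}: incremental={linear:>3}, "
              f"exponential={doubling:,}")
```

Under these assumed numbers, 30 generations of incremental improvement reach 31 units, while 30 generations of doubling pass one billion units.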

Ultimately, a post-singularity world would be unrecognizable. For example, humans could potentially scan their consciousness and store it in a computer. In theory, this approach would let humans live eternally as sentient robots or in a virtual world, such as the metaverse.

Important developments in the history of technological singularity include the following:

- 1958. In a tribute to mathematician John von Neumann, Polish-American scientist Stanislaw Ulam recalls a conversation in which von Neumann mentioned the possibility of a technological singularity.

- 1965. Mathematician and computer scientist Irving John Good discusses the idea of an intelligence explosion in his essay "Speculations Concerning the First Ultraintelligent Machine."

- 1986. Science fiction writer Vernor Vinge introduces the concept of the singularity in his novel, Marooned in Realtime.

- 1990s. Computer scientist Ray Kurzweil popularizes the singularity with books such as The Age of Spiritual Machines.

- 2006. The first Singularity Summit is held at Stanford University, focusing on technological singularity, and attended by Kurzweil and entrepreneur Peter Thiel.

- 2008. Singularity University, an educational think tank focused on addressing the challenges and opportunities of the singularity, is founded.

- 2023. AI leaders sign an open letter urging a six-month pause on the training of AI systems more powerful than GPT-4, and the development of ethics and regulations to guide further work.

What is singularity in robotics?

In robotics, a singularity is a configuration in which the robot's end effector, that is, the part of the robot that interacts with the environment, can't move in certain directions. Singularities occur most often in serial robots, which are composed of multiple rigid links connected in a chain by joints.

A serial robot or any six-axis robot arm will have singularities. According to the American National Standards Institute, robot singularities result from the collinear alignment of two or more robot axes, or joints. When this happens, the result is unpredictable robot motion and velocities. For example, a robot arm that moves into a singular configuration may get stuck and stop working.
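A common way to detect a singularity numerically is to check whether the determinant of the robot's Jacobian matrix is close to zero. The sketch below assumes the simplest possible serial robot, a planar arm with two links, rather than a six-axis arm. The function names, link lengths and tolerance are hypothetical choices made for illustration.

```python
import math


def jacobian(theta1: float, theta2: float, l1: float = 1.0, l2: float = 1.0):
    """2x2 Jacobian mapping joint velocities to end-effector velocity
    for a planar two-link arm (angles in radians, lengths in meters)."""
    s1, c1 = math.sin(theta1), math.cos(theta1)
    s12, c12 = math.sin(theta1 + theta2), math.cos(theta1 + theta2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]


def determinant(matrix) -> float:
    """Determinant of a 2x2 matrix."""
    (a, b), (c, d) = matrix
    return a * d - b * c


def is_singular(theta1: float, theta2: float, tol: float = 1e-9) -> bool:
    """True when the arm is in (or numerically at) a singular configuration."""
    return abs(determinant(jacobian(theta1, theta2))) < tol


if __name__ == "__main__":
    print(is_singular(0.3, 0.0))          # arm fully stretched: links collinear
    print(is_singular(0.3, math.pi))      # arm folded back on itself
    print(is_singular(0.3, math.pi / 2))  # elbow bent at 90 degrees
```

For this arm, the determinant simplifies to `l1 * l2 * sin(theta2)`, so it vanishes exactly when the two links line up. That is the collinear alignment described above: in that pose, the end effector can't move along the direction of the links.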

What is singularity in physics?

In physics, a gravitational singularity, or space-time singularity, describes a location in space-time, the term physicists use for the fabric of the universe. According to Albert Einstein's Theory of General Relativity, a space-time singularity is a place where the gravitational field and density of a celestial body become infinite and cannot be described in a coordinate system.

This means that space-time reaches a singular point where known physical laws no longer apply, and space and time no longer operate as they do in normal reality. The ability to describe what occurs beyond this point has been one of the biggest challenges in the development of modern physics.

There are several different types of singularities. Each singularity has a distinct physical feature with its own characteristics. These characteristics directly relate to the original theories from which they emerged. The two most important types of singularity are conical singularity and curvature singularity. The names describe the different theoretical shapes these singularities take.

Conical singularity

A conical singularity is a point where the limit of every generally covariant quantity -- meaning a quantity that doesn't depend on the choice of coordinate system under the theory of general relativity -- is finite. In this scenario, space-time takes the form of a cone built around this point.

The singularity is found at the tip of the cone. An example of conical singularity is hypothetical cosmic strings, a theorized phenomenon of one-dimensional defects in the fabric of space-time. Some scientists believe these one-dimensional defects may have formed during the early days of the universe when it cooled down after its initial rapid expansion.
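The cone shape can be made concrete with the standard idealized description of a straight cosmic string. The expressions below are a sketch under that assumption and don't come from this article: the symbols $\mu$ (the string's mass per unit length) and $\Delta$ (the deficit angle) are introduced here for illustration.

```latex
% Space-time around an idealized straight cosmic string along the z-axis.
% Locally it looks flat, but the angular coordinate covers less than a full turn:
ds^2 = -c^2\,dt^2 + dz^2 + dr^2 + r^2\,d\varphi^2,
\qquad 0 \le \varphi < 2\pi - \Delta

% The missing wedge (deficit angle) is set by the string's mass per unit length:
\Delta = \frac{8\pi G \mu}{c^2}
```

Removing a wedge of angle $\Delta$ from a flat sheet and gluing the edges together produces a cone. The curvature is zero everywhere except at the tip, $r = 0$, which is where the singularity sits.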

Curvature singularity

A black hole is the best example of curvature singularity. At the center of a black hole, general relativity predicts that all matter is compressed into an infinitely small point with infinite density and zero volume. In this scenario, gravity is infinite, and all concepts of space and time break down.

A black hole has an event horizon, a boundary at a radius within which no object or light can escape the black hole's gravity. The velocity required to escape this gravity is beyond the speed of light, a theoretical impossibility. Black holes are invisible and are called black because no light can escape from them. Researchers can only observe and photograph black holes based on the way their gravity warps light around them.
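The radius of the event horizon can be estimated with a short calculation. The sketch below assumes the simplest case, a black hole that doesn't rotate and carries no electric charge, for which the horizon sits at the Schwarzschild radius, r = 2GM/c^2. The function names and rounded constants are choices made for this example. The escape velocity function uses the Newtonian formula, which happens to give the same radius as general relativity here but isn't a full relativistic treatment.

```python
import math

G = 6.674e-11            # gravitational constant, m^3 kg^-1 s^-2 (rounded)
C = 299_792_458.0        # speed of light, m/s
SOLAR_MASS = 1.989e30    # mass of the sun, kg (rounded)


def schwarzschild_radius(mass_kg: float) -> float:
    """Event horizon radius in meters for a non-rotating, uncharged mass."""
    return 2 * G * mass_kg / C**2


def escape_velocity(mass_kg: float, radius_m: float) -> float:
    """Newtonian escape velocity in m/s at a given distance from the mass."""
    return math.sqrt(2 * G * mass_kg / radius_m)


if __name__ == "__main__":
    r_s = schwarzschild_radius(SOLAR_MASS)
    print(f"Horizon radius for one solar mass: {r_s / 1000:.2f} km")
    print(f"Escape velocity at that radius / c: "
          f"{escape_velocity(SOLAR_MASS, r_s) / C:.6f}")
```

With these constants, an object with the mass of the sun would need to be compressed to a radius of roughly 3 kilometers to form an event horizon. At that radius, the escape velocity equals the speed of light, and anywhere inside it, the required velocity would be higher.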

Black holes are often formed by the collapse of a massive star. However, there is also a hypothetical type known as a naked singularity, which researchers have found in computer simulations. This type of singularity would not be hidden behind an event horizon, so it would be visible from the outside. Theoretically, it would have existed long before the Big Bang that, according to the prevailing theory, marked the origin of the universe.
